AI-slop-mastery

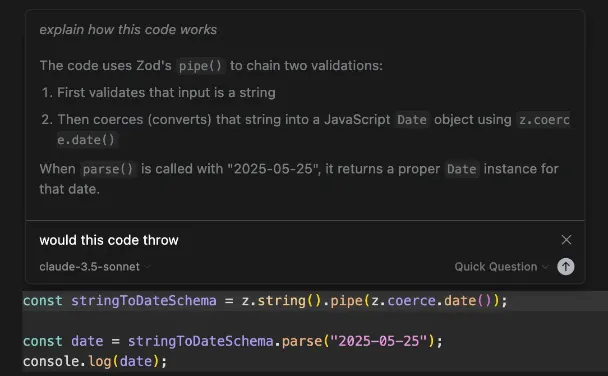

AI-assisted coding

The workflow

1) Context is king

- Problem: The more context you feed to your agent, the worse it performs. Keep context small.

- Solution: Use

/compactto compact and summarize your conversation history in claude code, or just prompt the AI to summarize the entire conversation history and put that into a markdown file, which you can then feed as context into a new chat.

2) Write E2E tests

End to end tests will give you the biggest bang for your buck.

3) Review every line of code

No excuses. If you didn't write it, then review it.

4) Abstract first

LLMs perform better when there is some sort of structure in your coding, for example, using TS or building abstractions in simple interfaces on top of third-party libraries will help the AI to understand your coding style, and it will build off of that.

5) Actually doing it

- Create a

features.mdto track features, describe them, and cross them off incrementally. - Always ask the agent to plan through solving a feature before implementing it.

- Always use a living document for features, saving progress you made on a feature and describing it so you can feed it as context even when starting a brand new convo.

Summary

-

Plan First: Never let the AI code without a

plan.md. Read the plan. If the plan is wrong, the code will be wrong. -

Give it Eyes (Harnesses): The AI cannot see the UI. Give it a

dry-runscript or anpm testso it can "see" if it broke something. -

Review is Mandatory: AI is not a replacement for knowing how to code. It is a replacement for typing. You must review every line (or use tools like Graphite/CodeRabbit for a second opinion).

Github Copilot

You can also use copilot on the web here:

Main use cases

- fix with copilot: You can highlight line(s), right click, and then use either modify with copilot or review with copilot to review the code, suggest any improvements, etc.

- regex: you can ask copilot to do regex for you

- generate commit messages for you: using the github GUI in vscode, you can commit AI-generated messages

Attaching context

You can attach context in the inline chat or in the chat sidebar by either manually adding files and images, or you can use these symbol prefixes to reference stuff in your codebase:

#: used to reference individual files, folders, symbols in your code (objects and types), content from the terminal, or an entire codebase@: used to reference different VSCode contexts, like@codebasefor your code,@terminalfor the terminal, or@workspacefor the current VSCode workspace. These are only available in the sidebar chat.

You can attach context in the inline chat by clicking CTRL + I twice to get a list of slash commands and available contexts.

Running terminal commands

With the chat sidebar, you can first type @terminal to give github copilot access to your terminal context, and then it will write a command to run based on the prompt.

You can also do CTRL + I in the terminal to popup an inline chat in the terminal to run commands there.

Slash commands

Copilot has a variety of slash commands that make doing monotonous tasks like documentation, fixing code, and creating unit tests much much easier. You can view a list of slash commands by clicking CTRL + I twice or by typing them manually in the chat sidebar.

Here are the most useful ones:

/explain: explains the selected code/fix: fixes the selected code/doc: creates documentation for the selected code, like JSdoc/tests: creates unit tests for the selected code

Github copilot CLI

The gh copilot CLI can be installed like so:

gh extension install github/gh-copilot

You can then use it to generate terminal commands:

gh copilot suggest "create a basic nextjs app"

Copilot Extensions

Copilot Extensions are 3rd party extensions that add additional context options with the @symbol to github copilot in your VSCode.

Go here for a list of all extensions

Here are the useful ones:

Agentic search

Go to the agentic search extensionin order to use agentic search capabilities, using cookies, etc.

prisma

Provides additional context for asking questions abotu prisma

neon db

Provides an additional context for asking questions about neon db.

Copilot instruction files

You can add copilot instruction files in the chat options, which apply to certain files or to all files. Think of these as a style guide and a way to let copilot know what your porject is about.

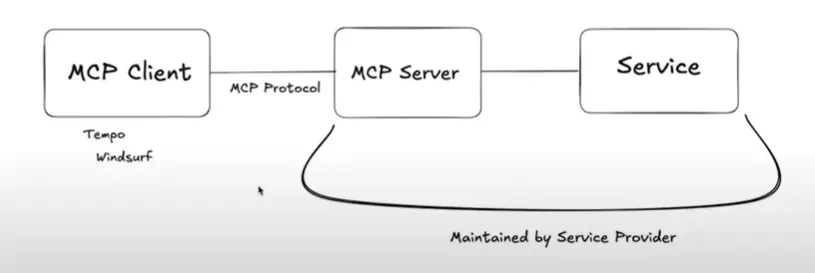

Enabling mcp

- Create a

.vscode/mcp.json - Specify mcp servers like so:

Cursor

Inline chat

The inline chat in cursor has several options for what you can do with it by first typing CTRL + K to bring up the inline chat, and then typing @ for context options.

You can also instead of asking it to generate or edit code, ask a quick question about it in the inline chat:

Adding context

You can add context with the @ symbol as a prefix.

@docs: adds documentation@web: tells cursor to do a web search@<filename>: adds the specific file as context

Adding docs

You can add certain websites' documentation to cursor, and cursor will index it and be able to reference it via the @docs context command. There are two ways to add documentation to certain websites you want:

- Add when prompted to add a new documentation when typing the

@docscommand - Add in the cursor features settings.

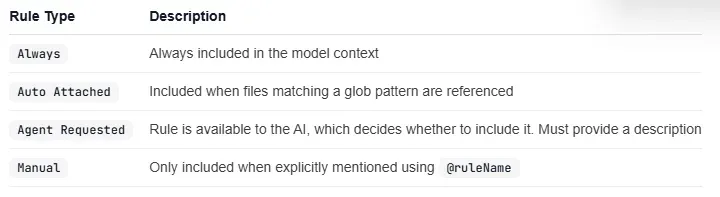

Cursor rules

Cursor rules are a new way to enforce coding style and give cursor additional context when you're chatting with it. There are 4 ways to create rules:

- Rules live in the

.cursor/rulesfolder in your workspace, and are single text.mdcfile. - You can also create a rule in the command palette in cursor

- You can ask cursor chat to create a rule for your project with the

/Generate cursor rulesslash command. - Go to cursor settings -> project settings -> and create rules.

Here are the 4 types of rules you can have:

Here is an example of a cursor mdc rule, where yu can add in additional file context as well with @ symbols.

Here is an example of a cursor mdc rule, where yu can add in additional file context as well with @ symbols.

---

description: RPC Service boilerplate

globs:

alwaysApply: false

---

- Use our internal RPC pattern when defining services

- Always use snake_case for service names.

@service-template.ts

You can get a list of reusable rules for each language that makes working on your codebase even better:

Antigravity

Intro

Open up the current folder in antigravity using the agy command

- implementation plans: WHen you're in planning mode you'll be able to create implementation plans and then you can even comment on those plans to have the AI implement your suggestions.

- inbox: when you click on the home screen icon, you're taking to a place where you can start a bunch of AI threads in parallel and they can even work on the same project.

COpilot CLI

The copilot command lets you pull up github copilot and use it like claude code. Everything there still applies.

Here are also some one-off prompts you can do:

copilot "create a bash script to check for uncommitted changes and push if clean"

CLI options

-p <prompt>: lets you do a one-off promp--allow-all-tools: gives copilot access to all tools. Maybe you want to run this in a dev container.

Here's a useful alias that lets you run a one-off prompt with all tools allowed

cpcli='copilot --allow-all-tools -p "$@"'

Then you can use like so:

cpcli "Explain each of these scripts and offer improvements"

Use cases and example prompts:

- Review the project README to make it easier for newcomers to understand

- What is taking up the most space on my own laptop?

Gemini CLI

CLI options

You can get just the text content of prompting an AI using the -p option, which can be useful for some quick prompting or even just running an AI without the SDK:

gemini -p "What is fine tuning?"

going YOLO mode

TO go yolo mode and accept all tool calls automatically, the first thing you'll want to do is to go into a sandbox and make sure nothing gets broken. You'll use these two commands:

--sandbox: runs in sandbox mode via a docker image--sandbox-image: optionally set the dockerhub iamge URL if you want the sandbox image to start from a different image.--yolo: sets yolo mode

However, yolo mode by default enters a sandbox, so you don't need that.

Thus the command to enter YOLO mode would look like so:

gemini --yolo

reference

--model <model_name>(-m <model_name>):- Specifies the Gemini model to use for this session.

- Example:

npm start -- --model gemini-1.5-pro-latest

--prompt <your_prompt>(-p <your_prompt>):- Used to pass a prompt directly to the command. This invokes Gemini CLI in a non-interactive mode.

--sandbox(-s):- Enables sandbox mode for this session.

--sandbox-image:- Sets the sandbox image URI.

--debug(-d):- Enables debug mode for this session, providing more verbose output.

--all-files(-a):- If set, recursively includes all files within the current directory as context for the prompt.

--help(or-h):- Displays help information about command-line arguments.

--show-memory-usage:- Displays the current memory usage.

--yolo:- Enables YOLO mode, which automatically approves all tool calls.

--telemetry:- Enables telemetry.

--telemetry-target:- Sets the telemetry target. See telemetry for more information.

--telemetry-otlp-endpoint:- Sets the OTLP endpoint for telemetry. See telemetry for more information.

--telemetry-log-prompts:- Enables logging of prompts for telemetry. See telemetry for more information.

--checkpointing:- Enables checkpointing.

--extensions <extension_name ...>(-e <extension_name ...>):- Specifies a list of extensions to use for the session. If not provided, all available extensions are used.

- Use the special term

gemini -e noneto disable all extensions. - Example:

gemini -e my-extension -e my-other-extension

--list-extensions(-l):- Lists all available extensions and exits.

--version:- Displays the version of the CLI.

Slash commands

-

/docs: brings up the Docs. -

/chat- Description: Save and resume conversation history for branching conversation state interactively, or resuming a previous state from a later session.

- Sub-commands:

save- Description: Saves the current conversation history. You must add a

<tag>for identifying the conversation state. - Usage:

/chat save <tag>

- Description: Saves the current conversation history. You must add a

resume- Description: Resumes a conversation from a previous save.

- Usage:

/chat resume <tag>

list- Description: Lists available tags for chat state resumption.

-

/clear- Description: Clear the terminal screen, including the visible session history and scrollback within the CLI. The underlying session data (for history recall) might be preserved depending on the exact implementation, but the visual display is cleared.

- Keyboard shortcut: Press Ctrl+L at any time to perform a clear action.

-

/compress- Description: Replace the entire chat context with a summary. This saves on tokens used for future tasks while retaining a high level summary of what has happened.

-

/editor- Description: Open a dialog for selecting supported editors.

-

/help(or/?)- Description: Display help information about the Gemini CLI, including available commands and their usage.

-

/mcp- Description: List configured Model Context Protocol (MCP) servers, their connection status, server details, and available tools.

- Sub-commands:

descordescriptions:- Description: Show detailed descriptions for MCP servers and tools.

nodescornodescriptions:- Description: Hide tool descriptions, showing only the tool names.

schema:- Description: Show the full JSON schema for the tool's configured parameters.

- Keyboard Shortcut: Press Ctrl+T at any time to toggle between showing and hiding tool descriptions.

-

/memory- Description: Manage the AI's instructional context (hierarchical memory loaded from

GEMINI.mdfiles). - Sub-commands:

add:- Description: Adds the following text to the AI's memory. Usage:

/memory add <text to remember>

- Description: Adds the following text to the AI's memory. Usage:

show:- Description: Display the full, concatenated content of the current hierarchical memory that has been loaded from all

GEMINI.mdfiles. This lets you inspect the instructional context being provided to the Gemini model.

- Description: Display the full, concatenated content of the current hierarchical memory that has been loaded from all

refresh:- Description: Reload the hierarchical instructional memory from all

GEMINI.mdfiles found in the configured locations (global, project/ancestors, and sub-directories). This command updates the model with the latestGEMINI.mdcontent.

- Description: Reload the hierarchical instructional memory from all

- Note: For more details on how

GEMINI.mdfiles contribute to hierarchical memory, see the CLI Configuration documentation.

- Description: Manage the AI's instructional context (hierarchical memory loaded from

-

/restore- Description: Restores the project files to the state they were in just before a tool was executed. This is particularly useful for undoing file edits made by a tool. If run without a tool call ID, it will list available checkpoints to restore from.

- Usage:

/restore [tool_call_id] - Note: Only available if the CLI is invoked with the

--checkpointingoption or configured via settings. See Checkpointing documentation for more details.

-

/stats- Description: Display detailed statistics for the current Gemini CLI session, including token usage, cached token savings (when available), and session duration. Note: Cached token information is only displayed when cached tokens are being used, which occurs with API key authentication but not with OAuth authentication at this time.

-

- Description: Open a dialog that lets you change the visual theme of Gemini CLI.

-

/auth- Description: Open a dialog that lets you change the authentication method.

-

/about- Description: Show version info. Please share this information when filing issues.

-

- Description: Display a list of tools that are currently available within Gemini CLI.

- Sub-commands:

descordescriptions:- Description: Show detailed descriptions of each tool, including each tool's name with its full description as provided to the model.

nodescornodescriptions:- Description: Hide tool descriptions, showing only the tool names.

-

/privacy- Description: Display the Privacy Notice and allow users to select whether they consent to the collection of their data for service improvement purposes.

-

/quit(or/exit)- Description: Exit Gemini CLI.

Memory

You should use a GEMINI.md file kind of the same way as a cursor rule - type it to be full of rules that the AI should listen to, like info about the project PRD and the tech stack.

You can use the /memory show slash command to view gemini's current memory in the current workspace. Since gemini has access to the memory tool, you can also tell it to update its memory, remove stuff from its memory, and that will lead to it having better responses.

Whenever you feel like memory is getting stale and the AI has lost the plot of your gemini rules in the GEMINI.md, you can always refeed it again and refresh the memory through this slash command:

/memory refresh

Adding files to context

You can refer to specific files in context using the @ prefix, which explicitly tells gemini to use file reading tools. By default, files and folders in your .gitignore are excluded from reading.

- Git-aware filtering: By default, git-ignored files (like

node_modules/,dist/,.env,.git/) are excluded. This behavior can be changed via thefileFilteringsettings.

Settings

Here is the complete documentation on how to configure your gemini CLI on the user level, system level, and project level.

All project level config lives inside the .gemini folder, and there are special files you can put in their that configure the behavior of the Gemini CLI.

settings.json

The settings.json file configures the CLI settings for the project, like enabling/disabling tools, adding MCP servers, etc.

- user level config: to set global gemini CLI settings, go to this path:

~/.gemini/settings.json - project level config: to set project level gemini CLI settings, create a

.gemini/settings.jsonfile in the cwd.

{

// -- UI & THEME SETTINGS --

// These settings control the look and feel of the Gemini CLI.

"theme": "GitHub",

// Sets the visual theme. "Default" is the standard theme.

// Check the documentation for other available themes.

"hideBanner": false,

// Set to true if you want to hide the ASCII art logo on startup.

"hideTips": false,

// Set to true to disable the helpful tips that appear in the UI.

// -- CONTEXT & MEMORY --

// Configure how the CLI understands the context of your project.

"contextFileName": "GEMINI.md",

// Specifies the file name for loading instructional context. The CLI

// searches for this file in the current directory, parent directories,

// and sub-directories to build a hierarchical context for the model.

// You can provide a single string or an array of strings (e.g., ["GEMINI.md", "CONTEXT.md"]).

// -- TOOL & COMMAND SETTINGS --

// Control how the model interacts with built-in and custom tools.

"autoAccept": false,

// If set to true, the CLI automatically executes tool calls that are

// considered (e.g., read-only operations) without asking for confirmation.

// This can speed up your workflow, but use it with caution.

"coreTools": [

"read_file",

"glob",

"run_shell_command(ls)",

"run_shell_command(cat)"

],

// Whitelists specific built-in tools for the model to use, enhancing security.

// If this setting is omitted, all core tools are available.

// You can also restrict shell commands to specific patterns, as shown above.

"excludeTools": [

"run_shell_command(rm -rf)"

],

// Blacklists specific tools or commands. This is less secure than `coreTools`.

// A tool listed in both `coreTools` and `excludeTools` will be excluded.

// Note: Command restrictions are based on simple string matching and can be bypassed.

// -- SANDBOXING FOR SECURITY --

// Isolate tool execution to protect your system.

"sandbox": "docker",

// Controls the sandboxing environment for executing tools.

// - "false" (default): No sandboxing.

// - "true" or "docker": Uses a pre-built Docker image for sandboxing.

// This is highly recommended when allowing the model to execute shell commands.

// -- FILE DISCOVERY --

// Define how the CLI finds files for @-mentions and other file operations.

"fileFiltering": {

"respectGitIgnore": true,

"enableRecursiveFileSearch": true

},

// "respectGitIgnore": When true, files and directories listed in your .gitignore

// (like node_modules/, dist/, .env) are automatically excluded.

// "enableRecursiveFileSearch": When true, allows recursively searching for

// files in subdirectories when you use the @ prefix in a prompt.

// -- CUSTOM TOOLS (ADVANCED) --

// For integrating your own project-specific tools.

"toolDiscoveryCommand": "bin/get_tools",

// A custom shell command that returns a JSON array of function declarations

// for your project's tools.

"toolCallCommand": "bin/call_tool",

// A custom shell command to execute a tool discovered via `toolDiscoveryCommand`.

// It receives the tool name as an argument and its parameters as JSON on stdin.

// -- TELEMETRY & USAGE STATISTICS --

// Help improve the Gemini CLI by sharing anonymous data.

"telemetry": {

"enabled": false,

"target": "local",

"otlpEndpoint": "http://localhost:4317",

"logPrompts": false

},

// Configures telemetry for debugging and monitoring.

// "enabled": Set to true to turn on telemetry.

// "target": Can be "local" or "gcp".

// "logPrompts": Set to true to include prompt content in logs (use with care for privacy).

"usageStatisticsEnabled": true,

// Set to false to opt-out of sending anonymized usage statistics.

// We don't collect any PII, prompt content, or file content. Disabling this

// just means we have less data to guide improvements.

// -- SESSION MANAGEMENT --

"maxSessionTurns": -1

// Sets the maximum number of conversation turns before a session is automatically reset.

// A "turn" consists of one user prompt and one model response.

// The default, -1, means the session is unlimited.

}

TIP

Note on environment variables in settings: String values within your settings.json files can reference environment variables using either $VAR_NAME or ${VAR_NAME} syntax. These variables will be automatically resolved when the settings are loaded. For example, if you have an environment variable MY_API_TOKEN, you could use it in settings.json like this: "apiKey": "$MY_API_TOKEN".

contextFileName(string or array of strings): a list of files to use as context or equivalent of cursor rules for the current gemini session.

By far the most important properties are the ones enabling and disabling tools, allowing you to get as granular as allowlisting certain commands or blacklisting them:

-

coreTools(array of strings):- Description: Allows you to specify a list of core tool names that should be made available to the model. This can be used to restrict the set of built-in tools. See Built-in Tools for a list of core tools. You can also specify command-specific restrictions for tools that support it, like the

ShellTool. For example,"coreTools": ["ShellTool(ls -l)"]will only allow thels -lcommand to be executed. - Default: All tools available for use by the Gemini model.

- Example:

"coreTools": ["ReadFileTool", "GlobTool", "ShellTool(ls)"].

- Description: Allows you to specify a list of core tool names that should be made available to the model. This can be used to restrict the set of built-in tools. See Built-in Tools for a list of core tools. You can also specify command-specific restrictions for tools that support it, like the

-

excludeTools(array of strings):- Description: Allows you to specify a list of core tool names that should be excluded from the model. A tool listed in both

excludeToolsandcoreToolsis excluded. You can also specify command-specific restrictions for tools that support it, like theShellTool. For example,"excludeTools": ["ShellTool(rm -rf)"]will block therm -rfcommand. - Default: No tools excluded.

- Example:

"excludeTools": ["run_shell_command", "findFiles"]. - Security Note: Command-specific restrictions in

excludeToolsforrun_shell_commandare based on simple string matching and can be easily bypassed. This feature is not a security mechanism and should not be relied upon to safely execute untrusted code. It is recommended to usecoreToolsto explicitly select commands that can be executed.

- Description: Allows you to specify a list of core tool names that should be excluded from the model. A tool listed in both

-

autoAccept(boolean):- Description: Controls whether the CLI automatically accepts and executes tool calls that are considered safe (e.g., read-only operations) without explicit user confirmation. If set to

true, the CLI will bypass the confirmation prompt for tools deemed safe. - Default:

false - Example:

"autoAccept": truesandbox(boolean or string):

- Description: Controls whether the CLI automatically accepts and executes tool calls that are considered safe (e.g., read-only operations) without explicit user confirmation. If set to

-

Description: Controls whether and how to use sandboxing for tool execution. If set to

true, Gemini CLI uses a pre-builtgemini-cli-sandboxDocker image. For more information, see Sandboxing. -

Default:

false -

Example:

"sandbox": "docker

Enabling MCP servers

To enable MCP servers per project, you can add them through the mcpServers key like so:

"mcpServers": {

"myPythonServer": {

"command": "python",

"args": ["mcp_server.py", "--port", "8080"],

"cwd": "./mcp_tools/python",

"timeout": 5000

},

"myNodeServer": {

"command": "node",

"args": ["mcp_server.js"],

"cwd": "./mcp_tools/node"

},

"myDockerServer": {

"command": "docker",

"args": ["run", "-i", "--rm", "-e", "API_KEY", "ghcr.io/foo/bar"],

"env": {

"API_KEY": "$MY_API_TOKEN"

}

}

}

Blocking tool use

You can restrict the shell commands that can be executed by the run_shell_command tool by using the tools.core and tools.exclude settings in your config file:

tools.core: Specifies an allowlist of commands.tools.exclude: Specifies a blocklist of commands. The blocklist takes precedence over the allowlist.

Here is an example config that allows git commands but blocks git push commands:

{

// ...

"tools": {

"core": [ "run_shell_command(git)" ],

"exclude": [ "run_shell_command(git push)" ]

}

// ...

}

Using tools

You can use tools automatically with gemini through just its automatic tool selection capability, but you can also manually invoke them, which may be useful, by just invoking these bash methods:

web fetch

web_fetch(

prompt="Can you summarize the main points of https://example.com/news/latest"

)

google search

google_web_search(query="Your query goes here.")

Sandboxing

There are three different ways to sandbox a one-off gemini CLI run or a TUI session into an isolated docker container:

- Run with the

--sandboxoption - Export the

GEMINI_SANDBOX=trueenv var into the current shell session - Set the

tools.sandboxkey in thesettings.jsonto"docker".

# Enable sandboxing with a command-line flag

gemini --sandbox --prompt "run the test suite"

# Use environment variable

export GEMINI_SANDBOX=true

gemini --prompt-interactive "explain this code"

# Configure in settings.json

{

"tools": {

"sandbox": "docker"

}

}

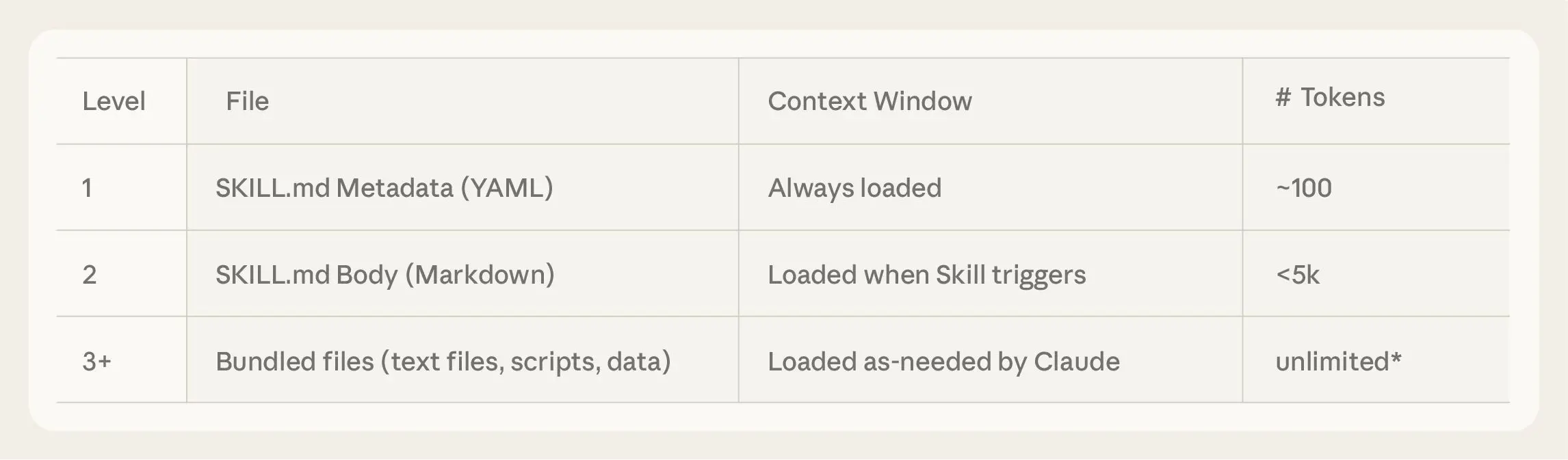

Agent skills

You can add custom agent skills to gemini, which gemini will recognize in a .agents/skills folder or the .gemini/skills folder.

To enable globally available skills and have them be automatically recognized, put them in the ~/.agents/skills folder.

CLI

gemini skills list: lists all active skills

Here's the complete way to use the cli:

# List all discovered skills

gemini skills list

# Link agent skills from a local directory via symlink

# Discovers skills (SKILL.md or */SKILL.md) and creates symlinks in ~/.gemini/skills

# (or ~/.agents/skills)

gemini skills link /path/to/my-skills-repo

# Link to the workspace scope (.gemini/skills or .agents/skills)

gemini skills link /path/to/my-skills-repo --scope workspace

# Install a skill from a Git repository, local directory, or zipped skill file (.skill)

# Uses the user scope by default (~/.gemini/skills or ~/.agents/skills)

gemini skills install https://github.com/user/repo.git

gemini skills install /path/to/local/skill

gemini skills install /path/to/local/my-expertise.skill

# Install a specific skill from a monorepo or subdirectory using --path

gemini skills install https://github.com/my-org/my-skills.git --path skills/frontend-design

# Install to the workspace scope (.gemini/skills or .agents/skills)

gemini skills install /path/to/skill --scope workspace

# Uninstall a skill by name

gemini skills uninstall my-expertise --scope workspace

# Enable a skill (globally)

gemini skills enable my-expertise

# Disable a skill. Can use --scope to specify workspace or user (defaults to workspace)

gemini skills disable my-expertise --scope workspace

Plan mode

You can have gemini follow a plan by just telling it to plan out a feature:

You can also use the /plan command to manually trigger plan mode, and then send messages mid-conversation to steer the agent in the right direction.

MCP

You can enable mcp servers in the gemini settings JSON:

- Open

~/.gemini/settings.json(or the project-specific.gemini/settings.json). - Add the

mcpServersblock. This tells Gemini: “Run this docker container and talk to it.”

{

"mcpServers": {

"github": {

"command": "docker",

"args": [

"run",

"-i",

"--rm",

"-e",

"GITHUB_PERSONAL_ACCESS_TOKEN",

"ghcr.io/github/github-mcp-server:latest"

],

"env": {

"GITHUB_PERSONAL_ACCESS_TOKEN": "${GITHUB_PERSONAL_ACCESS_TOKEN}"

}

}

}

}

TIP

TO avoid hardcoding secrets into the settings JSON, you can first export any secrets as an environment variable into the current session and then you can interpolate via ${} syntax in the JSON file.

To verify connections, you can use the /mcp command:

/mcp list: lists all currently active MCP servers/mcp reload: reloads the MCP server

Claude code

CLAUDE.md

The CLAUDE.md file is based on three facts about LLM agents:

- Coding agents know absolutely nothing about your codebase at the beginning of each session.

- The agent must be told anything that's important to know about your codebase each time you start a session.

CLAUDE.mdis the preferred way of doing this.

This file should clarify three questions:

- WHAT: tell Claude about the tech, your stack, the project structure. Give Claude a map of the codebase. This is especially important in monorepos! Tell Claude what the apps are, what the shared packages are, and what everything is for so that it knows where to look for things

- WHY: tell Claude the purpose of the project and what everything is doing in the repository. What are the purpose and function of the different parts of the project?

- HOW: tell Claude how it should work on the project. For example, do you use

buninstead ofnode? You want to include all the information it needs to actually do meaningful work on the project. How can Claude verify Claude's changes? How can it run tests, typechecks, and compilation steps?

To write a good CLAUDE.md file, we should follow these core principles:

CLAUDE.mdis for onboarding Claude into your codebase. It should define your project's WHY, WHAT, and HOW.- Less (instructions) is more. While you shouldn't omit necessary instructions, you should include as few instructions as reasonably possible in the file.

- Keep the contents of your

CLAUDE.mdconcise and universally applicable. - Use Progressive Disclosure - don't tell Claude all the information you could possibly want it to know. Rather, tell it how to find important information so that it can find and use it, but only when it needs to to avoid bloating your context window or instruction count. Also, don't embed files directly with

@, as that bloats the context. Just reference the file. - Claude is not a linter. Use linters and code formatters, and use other features like Hooks and Slash Commands as necessary.

CLAUDE.mdis the highest leverage point of the harness, so avoid auto-generating it. You should carefully craft its contents for best results.

ANother 4 principles:

-

Start with Guardrails, Not a Manual. Your

CLAUDE.mdshould start small, documenting based on what Claude is getting wrong. -

Don’t

@-File Docs. If you have extensive documentation elsewhere, it’s tempting to@-mention those files in yourCLAUDE.md. This bloats the context window by embedding the entire file on every run. But if you just mention the path, Claude will often ignore it. You have to pitch the agent on why and when to read the file. “For complex … usage or if you encounter aFooBarError, seepath/to/docs.mdfor advanced troubleshooting steps.” -

Don’t Just Say “Never.” Avoid negative-only constraints like “Never use the

--foo-barflag.” The agent will get stuck when it thinks it must use that flag. Always provide an alternative. -

Use

CLAUDE.mdas a Forcing Function. If your CLI commands are complex and verbose, don’t write paragraphs of documentation to explain them. That’s patching a human problem. Instead, write a simple bash wrapper with a clear, intuitive API and document that. Keeping yourCLAUDE.mdas short as possible is a fantastic forcing function for simplifying your codebase and internal tooling.

principle 1 - Keep your claude md small

As instruction count increases, instruction-following quality decreases uniformly. This means that as you give the LLM more instructions, it doesn't simply ignore the newer ("further down in the file") instructions - it begins to ignore all of them uniformly

This implies that your CLAUDE.md file should contain as few instructions as possible - ideally only ones which are universally applicable to your task.

TIP

Aim for a CLAUDE.md less than 60 lines long

principle 2 - use progressive disclosure

The term Progressive disclosure is just a fancy way of saying to reference different markdown files inside your CLAUDE.md file and then give brief descriptions of those files so that Claude can decide whether or not to read those markdown files.

However, referencing files directly with the @ prefix is NOT progressive disclosure, as that just completely embeds the file content into the context.

Rather, in the CLAUDE.md, to implement progressive disclosure, just reference the filepath and describe what that file does, and claude will decide whether or not to look at that md file.

CLI options

claude -p <prompt>: runs a one-off promptclaude --model=<model>: runs claude with a specific model. Here are the different values you can pass for the--modelparameter:sonnetopushaiku

claude --continue: continue off from the last sessionclaude --resume: resume a specific session.

Keyboard shortcuts

- auto accept mode: TO enter auto accept mode for edits, press

shift + tabkeyboard shortcut - plan mode: TO enter plan mode, press

shift + tabtwice

Slash options

When inside a conversation with claude code, you have access to these special slash commands:

/model <model>: change the model mid convo to one ofhaiku,sonnet, oropus/compact: compacts previous conversation history into a summary. Useful when you've now moved on to a different task./clear: clears the conversation history/init: reads the current codebase and based off that, creates aCLAUDE.mdfile/context: visualize the current context and how much of it is taken up./status: shows current token and session info/review: performs a code review/security-review: performs a code review that searches for security flaws./install-github-app: allows you to add claude as a collaborator to a github repoi so you can assign it issues and to pull requests

Managing context

Hit the esc key twice to stop a response while Claude is generating. Then you can start a user query by prefixing with a # to start a memory which claude code will remember during the conversation.

Also use these slash commands to manage memory:

/clear: clears the conversation history/compact: compacts previous conversation history into a summary. Useful when you've now moved on to a different task.

Commands

Commands are special markdown files that must live within the .claude/commands folder, and can be used as custom slash commands.

- For example, a

.claude/commands/goal.mdcan be invoked via the/goalslash command, and it acts like a really big prompt to claude, giving it all the markdown content.

NOTE

The main use case of commands is to prompt for repetitive tasks like linting, testing, or adding documentation. You can also do neat stuff like dynamically add arguments and interpolate bash commands in these markdown files.

Skills / Plugins

You can install MCP servers and skills as "plugins" in claude code.

- List all skills claude has access to with

/skillscommand. - Manage plugins (install and delete) by using the

/pluginscommand

To add custom skills to claude code, they should be SKILL.md files within the .claude/skills folder

Hooks

Claude hooks are bash commands that run at different lifecycle moments such as session start, pre compact, and on stop. Key moments include startup, resume, clear, and various tool use stages like pre tool use and post tool use.

NOTE

You can check all registered hooks with the /hooks command.

If you want to create a claude command that can easily create hooks for you, use this command:

Here is a typescript SDK for creating claude commands:

You can specify the events to listen to and a file to run on those events, and you do all this from a json file. These are the lifecycle hooks you can listen for:

- PreToolUse: This hook runs before a tool (like

edit_fileorBash) is executed. It is the most powerful point of control for preventative measures and is the only event that can proactively block a tool’s execution. - PostToolUse: This hook runs after a tool has successfully completed. It’s ideal for reactive tasks like automatic formatting, running tests, or logging. It cannot block execution but can provide feedback to Claude.

- Notification: This hook triggers whenever Claude Code sends a notification to the user, for example, when it’s waiting for input or has completed a long task. It is purely informational and cannot block execution.

- Stop: This hook runs when the main Claude Code agent finishes responding. It can be configured to prevent the agent from terminating, forcing it to continue working until a specific condition is met.

- SubagentStop: This hook runs when a sub-agent task completes its work. Like the

Stophook, it can block the sub-agent from stopping.

You specify hooks in JSON in the .claude/settings.local.json under the "hooks" key:

"matcher": the tools to match on"hooks": the files to run when matched

{

"hooks": {

"PreToolUse": [

{

"matcher": "Bash",

"hooks": [

{

"type": "command",

"command": "jq -r '\\(.tool_input.command) - \\(.tool_input.description // \"No description\")' >> ~/.claude/bash-command-log.txt"

}

]

}

]

}

}

Hooks receive JSON data via standard input (stdin) that provides session information and event-specific data, such as session_id, transcript_path, and tool_name.

They communicate status back to Claude Code primarily through shell exit codes and, for more advanced control, structured JSON output to stdout.

- Exit Code 0: Indicates success. Any output to stdout is shown to the user in the transcript, but not to the model.

- Exit Code 2: Signals a blocking error. This tells Claude Code to halt the current action (for

PreToolUsehooks) and processes the feedback fromstderras new input for Claude to understand the error and adjust its plan. It is crucial that error messages for blocking errors are sent tostderr. - Other Non-Zero Exit Codes: Indicate a non-blocking error. The hook failed, but execution continues. The error message from

stderris shown to the user, but not to Claude.

NOTE

This means since hooks provide parameters in a deterministic format, we can programatically do stuff with those inputs in another program, like a python or bash script.

For more examples on how to use hooks, look here:

custom hook: deny dangerous commands

This hook is used to deny dangerous commands like rm -rf or curling to a non HTTPS string.

"hooks": {

"PreToolUse": [

{

"matcher": "Bash",

"hooks": [

{

"type": "command",

"command": ".claude/hooks/pre-bash-firewall.sh"

}

]

}

]

}

#!/usr/bin/env bash

set -euo pipefail

# stdin: JSON with .tool_input.command

cmd=$(jq -r '.tool_input.command // ""')

# Block list (add as needed)

deny_patterns=(

'rm\s+-rf\s+/'

'git\s+reset\s+--hard'

'curl\s+http'

)

for pat in "${deny_patterns[@]}"; do

if echo "$cmd" | grep -Eiq "$pat"; then

echo "Blocked command: matches denied pattern '$pat'. Use a safer alternative or explain why it's necessary." 1>&2

exit 2

fi

done

exit 0

custom hook: write bash commands to a log

#!/usr/bin/env bash

set -euo pipefail

cmd=$(jq -r '.tool_input.command // ""')

printf '%s %s\n' "$(date -Is)" "$cmd" >> .claude/bash-commands.log

exit 0

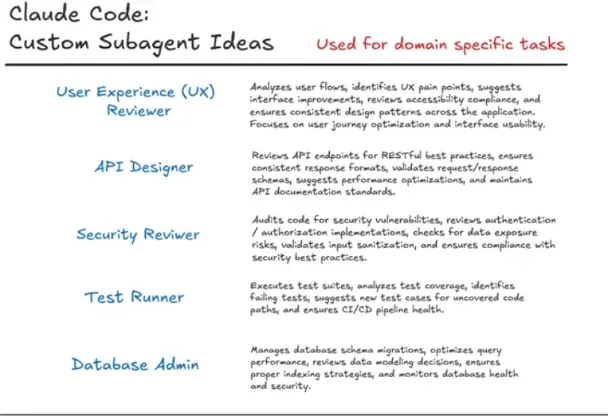

Subagents

Subagents in claude are just several different agents each with their own system prompt and context window.

You can create subagents with the /agents command, and the agent specification is like so:

- storage: agents are stored as markdown files in the

.claude/agentsfolder in your project - tools: You can specify which tools the agent has access to.

You can also set agents on the global level:

| Type | Location | Scope | Priority |

|---|---|---|---|

| Project | .claude/agents/ | Available only in the current project | Highest |

| User | ~/.claude/agents/ | Available across all your projects | Lower |

Here is an example of the different types of subagent personalities you can create.

subagents in claude code skills

You can specify skills that a subagent can access, as they don't inherit skills from the parent.

# .claude/agents/code-reviewer/AGENT.md

---

name: code-reviewer

description: Review code for quality and best practices

skills: pr-review, security-check

---

Here's another example:

---

name: your-sub-agent-name

description: A clear description of when this sub agent should be used.

tools: tool1, tool2 # Optional - inherits all tools from the main agent if omitted.

---

Your sub agent's system prompt goes here.

This section should clearly define the sub agent's role, capabilities, personality, and approach to solving problems. Include specific instructions, best practices, and any constraints the sub agent should follow.

| Field | Required | Description |

|---|---|---|

name | Yes | A unique identifier for the agent, using lowercase letters and hyphens. |

description | Yes | A natural language description of the agent’s purpose, used by Claude for automatic delegation. |

tools | No | A comma-separated list of specific tools the agent can use. If omitted, it inherits all tools from the main agent, including any connected via MCP servers. |

skills | No | A command-separated list of skill names the agent can have access to. |

Git worktrees

Git worktrees are especially useful when running several agents in parallel.

Techniques and strategies

forcing thinking

To force thinking, you can use these keywords in your prompt:

- "think": reasoning up to 4000 tokens

- "think harder": reasoning up to 10000 tokens

switch models smartly

Use opus for planning, sonnet for execution.

Use plan mode

You can tell claude to "make the plan multi-phase" which makes the plan, well, multi-phase.

Process - Claude code planning workflow

-

Start each task with a plan file

-

Create or designate a

plans/folder in your repo (e.g.plans/feature-query-builder.md). -

Ask the AI to write a plan into that file, not to write code yet.

-

-

Prompt the AI to draft the plan

Use a prompt along the lines of- “Here is the feature I want. Create a detailed implementation plan and write it into

plans/feature-X.md. Include: restated requirements, architecture, file-level changes, pseudo-code, and test/lint/type-check commands.”

- “Here is the feature I want. Create a detailed implementation plan and write it into

-

Review and edit the plan with the AI

-

Read the plan and comment like you would on a junior engineer’s design doc (e.g. “route naming is off”, “missing auth checks”, “doesn’t match existing patterns”).

-

Ask the AI to revise the plan until it matches how you actually want to build the feature.

-

-

Implement strictly from the plan

-

Once happy, say: “Now follow the plan in

plans/feature-X.mdand implement the changes step by step.” -

When things change, first update the plan file, then implement according to the updated plan.

-

-

Keep the plan as a living document

-

Whenever tests fail or requirements shift, tell the AI: “Update

plans/feature-X.mdto reflect what we’ve learned, then adjust the implementation.” -

For new related features, point the AI at existing plan files so it keeps architecture consistent.

-

Living document feature: save to github issue.

Ask Claude to save the current plan state to a GitHub Issue before clearing the context.

Step A: Save State

"Make a GitHub issue containing the current plan, checking off the items we have already completed."

Claude runs gh issue create automatically.

Step B: Reset & Resume

/clear "Get GitHub issue #24 and enact Phase 4 of that plan."

Claude reads the issue from GitHub, sees where it left off, and resumes work with a fresh context window.

Claude on your PRs

Run the /install-github-app to install a claude code github action.

- By default, the Claude Code GitHub Action listens for comments or issues mentioning

@claude.

This actions makes claude become a collaborator on your PRs for the current repo. You can now tag claude on issues, make it an assignee, etc.

Claude config

The claude config file lives here:

- global:

~/.claude/settings.json - local:

.claude/settings.local.json

allowed tools

At the project or global level, you can set which tools claude does and doesn't need permission for:

{

"permissions": {

"allow": [

"WebSearch",

"WebFetch"

"Bash(git add:*)"

],

"deny": [],

"ask": []

}

}

MCP

You can add MCP config in a .mcp.json in the current directory, which claude can access and load the MCP servers from.

It should be in this format:

{

"mcpServers": {

"github": {

"type": "http",

"url": "https://api.githubcopilot.com/mcp/",

"headers": {

"Authorization": "Bearer <ACCESS_TOKEN_HERE>"

}

}

}

}

Here are some important things to keep in mind:

- The

typeproperty is required in MCP configuration with claude, and should be one of these three types:"http": HTTP transport server"stdio": locally running STDIO transport server"sse": SSE transport server

The access token must be valid and scoped correctly, having at least the repo permissions.

Here's an example of my favoriute MCP setuo:

"mcpServers": {

"playwright": {

"type": "stdio",

"command": "npx",

"args": [

"@playwright/mcp@latest"

],

"env": {}

},

"context7": {

"type": "http",

"url": "https://mcp.context7.com/mcp",

"headers": {

"CONTEXT7_API_KEY": "apikeyhere"

}

}

},

Claude Cowork

Scheduled tasks

Run scheduled tasks by using the /schedule command.

CodeRabbit CLI

coderabbit: runs a normal code review sessioncoderabbit --plain: runs a normal code review session

Codex

# Interactive: start a session with a prompt

codex "Refactor utils/logger to remove dead code"

# Non-interactive: run once and print the result

codex exec "Generate a README outline for a Flask API"

# Choose a model

codex -m o3 "Summarize the contribution guidelines"

# Use local OSS provider (expects a local Ollama server)

codex --oss "Suggest performance improvements for this script"

# Attach one or more images to the initial prompt

codex -i screenshots/login.png -i screenshots/dashboard.png \

"Describe accessibility issues in these screens and fix React code"

Core Concepts

- Prompt: Optional initial message you provide on invocation. If omitted, Codex opens the interactive CLI waiting for your input.

- Models (

-m): Select the model to use.- Works with configured providers;

--ossis a convenience flag to target a local open-source provider (verifies a local Ollama server).

- Images (

-i): Attach one or more files to give visual context (e.g., UI screenshots) to the first turn. - Config override (

-c key=value): Override any config key at runtime. Values parse as JSON when possible; otherwise treated as strings. Examples:-c model="o3"-c 'sandbox_permissions=["disk-full-read-access"]'-c shell_environment_policy.inherit=all

- Sandbox (

-s): Controls what Codex-generated commands can do when executed.read-only: Only safe read operations (e.g.,ls,cat,sed).workspace-write: Can modify files inside the workspace.danger-full-access: No filesystem sandboxing. Use with extreme care.

- Approval policy (

-a): When Codex asks before executing commands.untrusted: Run only trusted read commands automatically; ask for others.on-failure: Run all commands; ask only if one fails and needs escalation.on-request: Codex decides when to ask (balanced default for many cases).never: Never ask; failures are returned immediately.

codex -a untrusted -s workspace-write

Interactive session (default)

# With an initial prompt

codex "Add pagination to /api/posts, include tests"

# No prompt: open the interactive TUI and chat

codex

Use the session to iterate on code, ask Codex to propose patches, run tests, and refine outputs. Combine with sandbox + approvals to keep changes safe.

exec — one-shot non-interactive

codex exec "Write a bash script that cleans old build artifacts safely"

# Pipe output directly to a file

codex exec "Create a .gitignore for a Rust workspace" > .gitignore

# With images

codex exec -i docs/wireframe.png "Generate a responsive HTML/CSS layout"

apply — apply the latest diff from Codex

When Codex proposes edits, it often produces a patch. Apply it directly to your working tree:

# After Codex proposes a patch in the session

codex apply

Under the hood this runs a git apply of the last diff the agent produced. Ensu

re you’re in a clean repo or understand what will change before applying.

Practical Workflows & Use Cases

- Code edits in a repo: Ask Codex to modify specific files/functions; review the

proposed patch; run

codex applyto commit changes locally. - Bug triage and fixes: Provide failing test output; Codex pinpoints the issue, proposes a change, and helps validate the fix.

- Documentation & READMEs: Generate outlines or complete docs via

codex execa nd refine interactively. - Refactors: Guide multi-file refactors in steps; use sandboxed execution and ap provals to run formatters and tests.

- UI reviews: Attach screenshots with

-iso Codex can suggest accessibility im provements and produce code updates. - OSS/local models: Use

--ossto target a local provider when network-limited or to keep data local.

Here are some examples

- Fix a bug interactively and apply

codex -s workspace-write -a on-request \

"Investigate failing test in tests/test_parser.py and fix the parser"

# In the session, ask Codex to run tests, identify the failure, propose a patch.

# Then apply the patch locally:

codex apply

- Generate a README non-interactively

codex exec -m o3 \

"Write a comprehensive README for a RESTful Flask service; include setup, run,

env, testing"

- Attach images for context

codex -i screenshots/mobile-home.png -i screenshots/mobile-detail.png \

"Spot a11y issues and provide React Native fixes"

- Override configuration at runtime

# Switch model and expand permissions for the current run only

codex -c model="o3" -c 'sandbox_permissions=["disk-full-read-access"]' \

"Profile code hotspots and propose optimizations"

# Inherit full shell environment (example nested key override)

codex -c shell_environment_policy.inherit=all \

"Use my local toolchain to compile and test"

- Tune sandbox and approvals

# Conservative: read-only + untrusted (ask for anything risky)

codex -s read-only -a untrusted "List flaky tests and analyze logs"

# Productive middle ground: workspace writes allowed; ask when needed

codex -s workspace-write -a on-request \

"Refactor utils/date and update usage sites"

# Power mode: bypass everything (only in safe, sandboxed environments)

codex --dangerously-bypass-approvals-and-sandbox \

"Bulk-apply code style fixes across the repo"

Tips & Best Practices

- Be explicit: Include filenames, functions, and constraints in prompts.

- Iterate: Start broad, then refine with follow-ups or

execreruns. - Review diffs: Read proposed patches before

codex apply. - Keep changes scoped: Smaller prompts produce clearer, safer edits.

- Use profiles: Store your defaults in

~/.codex/config.tomland reference them with-p. - Treat

danger-full-accessand--dangerously-bypass-approvals-and-sandboxwi th care; preferworkspace-write+on-requestfor day-to-day work.

Vibe coding mastery

tech stack

The "gooner tech stack" as I like to call it helps with vibe coding and consists of NextJS, tailwind, typescript, supabase, shadcn.

Workflow

- Tell chat about your idea and ask it to make a PRD (project requirements document) so that you can input it into cursor.

- Ask chat to convert the PRD into a prompt for building with cursor or replit

- Copy a standard cursor rules for nextjs, tyepscript, react, enable it for project.

- Paste in your PRD into cursor and ask it to create a landing page for you. You can also paste in wireframes or mockups of what you want the UI to look like.

- Once the code is built, ask cursor to explain the file structure and what each file in the codebase does so you can understand it better.

must have workflow techniques

- have good git hygeine: commit consistently once the AI changes something so you can easily roll back.

Creating a PRD

A PRD should have the structure of having a high-level overview for the product, what it's about, and the tech stack that will be used in it.

Then you break up the PRD into milestones, where each milestone defines a technical objective to complete and the technology that will be used to complete it.

Here is a prompt that makes any AI a PRD master:

You are a software engineering designer that excels at creating PRDs for web apps that will then be generated with AI. Your task is to create a PRD for <insert app idea>

After creating the PRD, ask the AI to give a prompt that implements the PRD:

create a ready-to-generate prompt for building this app with AI tools like GPT or a working code scaffold.

Ui first creation

You can start off creating the UI simply with HTML and tailwindcss, which cursor excels at. Then based off that UI, you can tell cursor to make a plan (essential step) and then implement it peacemeal:

step 1: UI creation

Use this prompt as a cursor rule to build gorgeous UIs, and paste in mockups for inspiration.

## Role

You are a senior front-end developer.

## Design Style

- A perfect balance between elegant minimalism and functional design.

- Soft, refreshing gradient colors that seamlessly integrate with the brand palette.

- Well-proportioned white space for a clean layout.

- Light and immersive user experience.

- Clear information hierarchy using subtle shadows and modular card layouts.

- Natural focus on core functionalities.

- Refined rounded corners.

- Delicate micro-interactions.

- Comfortable visual proportions.

## Mobile UI isntructions

- **Page Size and Outlines**: Each page should be 375x812 pixels, with outlines to simulate a mobile device frame.

- **Icons**: Use an online vector icon library, ensuring that icons do not have background blocks, baseplates, or outer frames.

- **Images**: Images must be sourced from open-source image websites and linked directly.

- **Styles**: Utilize Tailwind CSS via CDN for styling purposes.

- **Status Bar**: Do not display the status bar, including time, signal, and other system indicators.

- **Non-Mobile Elements**: Avoid displaying non-mobile elements such as scrollbars.

- **Text Color**: All text should be either black or white.

## Task

This is an **AI Calorie calculator app** where users can take pic of food and auto extract nutrition**.

- Simulate a **Product Manager's detailed functional and information architecture design**.

- Follow the **design style** and **technical specifications** to generate a complete **UI design plan**.

- Create a **UI.html** file that contains all pages displayed in a **horizontal layout**.

- Generate the **first two pages** now

step 2: ascii layouts

Tp figure out how to go about for the rest of the pages, you can ask cursor to brainstorm ascii layouts for you of what the page should look like, which consumes less tokens and is easier for the AI model to iterate on.

step 3: creating a theme

Go to the Beautiful themes for shadcn/ui — tweakcn | Theme Editor & Generator site to create your custom shadcn theme, paste it in your code, and then ask cursor if it understands your theme and ask it to display it for you (adds it to context)

step 4: adding animations

Tell the model which types of animations you would like to do. You can just copy this prompt:

Add smooth animations and micro interactions like:

- smooth hover effects

- gentle tilt effects

- scroll-based animations

- animated glitch-style

- inertia-based scroll

Vibe coding prompts

Here are some good vibe coding prompts to inject during your workflow:

- responsive: Tell the AI to "make the app responsive and mobile-friendly"

- good UX: Tell the AI to improve UX to make the app simpler and more visual, while keeping all current functionality.

Workflow for projects

The main bulk of a vibe-coding based workflow hinges on 4 cursor rules files you should define:

1. Coding Preferences – "Write Code Like This"

Purpose: Ensures clean, maintainable, and efficient code.

Rules:

- Simplicity: "Always prioritize the simplest solution over complexity."

- No Duplication: "Avoid repeating code; reuse existing functionality when possible."

- Organization: "Keep files concise, under 200-300 lines; refactor as needed."

- Documentation: "After major components, write a brief summary in

/docs/[component].md(e.g.,login.md)."

Why It Works: Simple code reduces bugs; documentation provides a readable audit trail.

2. Technical Stack – "Use These Tools"

Purpose: Locks the AI to your preferred technologies.

- stack: NEXTjs, TS, tailwind, shadcn

- database: Use PostgreSQL with drizzle, using local docker connection string in development and production URL in production.

- testing: write unit tests using vitest to test isolated classes and functions.

Why It Works: Consistency prevents AI from switching tools mid-project.

3. Workflow Preferences – "Work This Way"

Purpose: Controls the AI’s execution process for predictability.

- Steps: "Break large tasks into stages; pause after each for my approval."

- Planning: "Before big changes, write a

plan.mdand await my confirmation." - Tracking: "Log completed work in

progress.mdand next steps inTODO.txt."

Why It Works: Incremental steps and logs keep the process transparent and manageable.

4. Communication Preferences – "Talk to Me Like This"

Purpose: Ensures clear, actionable feedback from the AI.

- Summaries: "After each component, summarize what’s done."

- Clarification: "If my request is unclear, ask me before proceeding."

Why It Works: You stay informed without needing to decipher AI intent.

LLM websites

ChatGPT

Canvas mode

Canvas mode is a way to edit some text, like an essay or code, by "pair coding" with chatGPT.

- You can highlight text in canvas mdoe and ask chatgpt to do something abotu that highlighted text, which is faster than simply retyping it.

Code execution

You can ask ChatGPT to execute code in a python repl to give you back exact mathematical answers or to create charts with matplotlib. Here are the things you can do:

- math: get back perfect math answers by asking in a repl

- graphs: ask for perfect graphs using matplotlib

- qr codes: ask to make qr codes from a link using the

qrcodepython package

Tasks

In the chatgpt pro plan, you can ask o3-mini model to create recurring tasks for you that get executed everyday and notify you via email.

For example, you could ask gpt to send you the latest ai news every morning

Microsoft copilot

Microsoft copilot is cool because it has AI sidebar integration in the edge browser to analyze the contents of a website.

NotebookLM

NotebookLM is really cool and has a great use case for generating minutes of audio on the fly.

- language use case: Use it to generate lessons and roadmaps of language learning content, and then create podcasts or voice lessons in your target language.

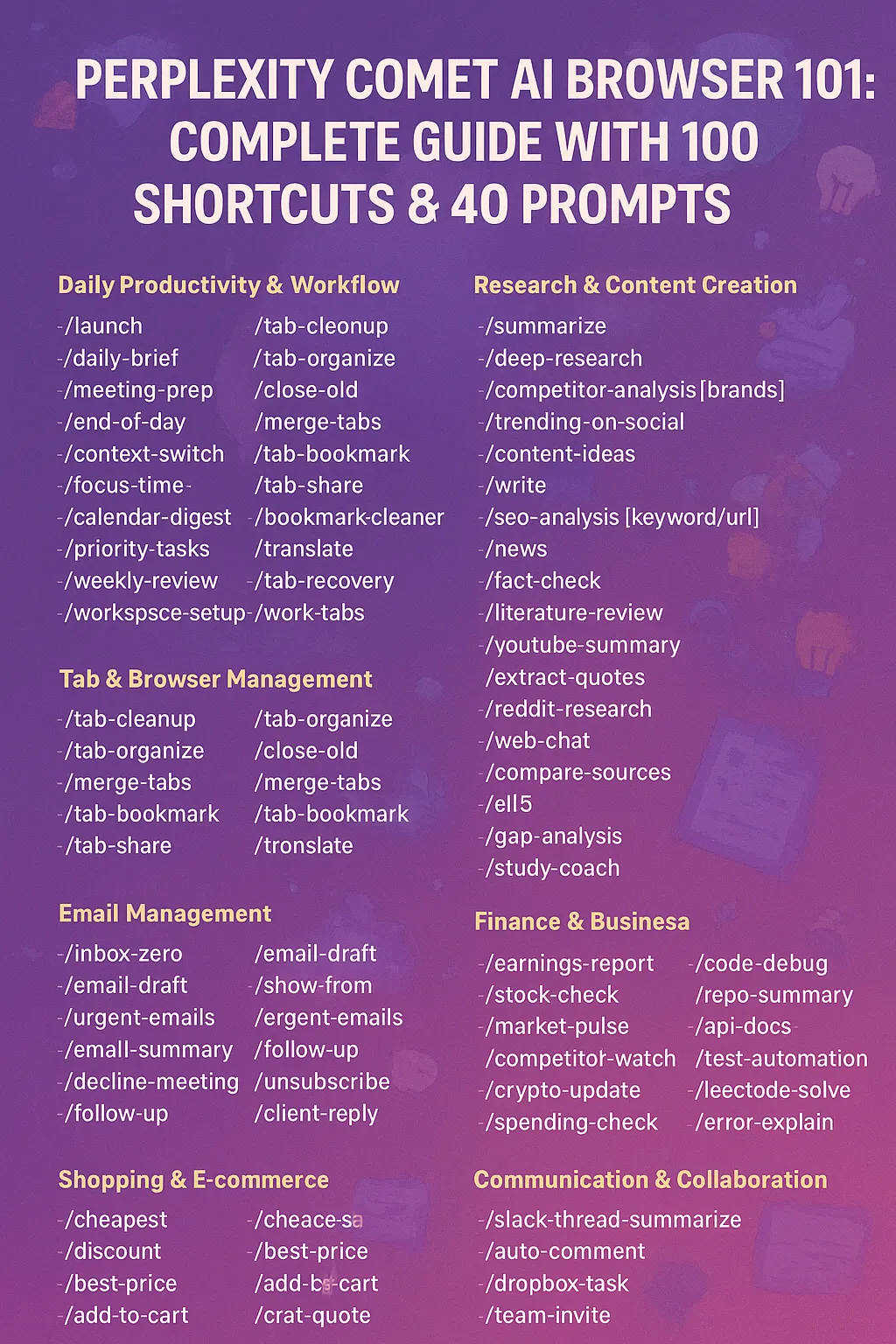

Perplexity/Comet

Shortcuts

In comet, you can register special slash commands that basically just copy and paste a predefined prompt, which is useful for saving keystrokes. Here are some good shortcuts

Context

- Use

@tabor@productpageto reference any open tabs.

Local LLMs

You can use local LLMs in a chat interface either with LMStudio desktop app or the Ollama CLI.

You can download quantized models off of hugging face or in LM Studio itself.

Theory

Quantization

quantization is the idea of precision in model parameters, either letting each parameter have a floating point precision (more precise) or an integer precision (less precise).

Although it sounds like being more precise would lead to better results - which it does - it also adds up more space to download local models and requires more RAM. To use a model for inference, it has to get loaded into memory, and even the smallest LLM has over 1 billion parameters. Higher precision leads to higher RAM requirements:

- A model with float32 quantization for parameters means each parameter is 32 bits, or 4 bytes, meaning a model with 2 billion parameters would need 8GB of RAM.

Thus quantization allows us to mathematically round the floating point precision parameters to integers, either int4 (4 bit) or int8 (8 bit) to cut down the RAM usage of a model:

- A model quantized with int4 quantization for parameters means each parameter is 4 bits, or 0.5 bytes, meaning a model with 2 billion parameters would only need 1GB of RAM.

TIP

quantization allows us to achieve up to 1/2 or 1/4 cutting of RAM usage, while still having only a negligible difference in performance from the more precise unquantized models.

Offloading

offloading is the technique of loading a model's parameters between CPU, GPU, and RAM, in order to efficiently load a model in memory.

A main drawback of offloading is that model performance becomes worse, even if memoyr use is more efficient.

Lm studio

CLI

lms ls: lists all downloaded modelslms ps: lists all currently loaded models in memorylms load <model-id>: loads a specific modellms unload <model-id>: unloads a specific model

listing models

Show all downloaded models using the lms ls command. You have 4 options to consider:

--llm: lists only llm models--json: lists info in JSON--detailed: lists details info--embeddings: prints only embedding models

lms ls

Example output:

You have 47 models, taking up 160.78 GB of disk space.

LLMs (Large Language Models) PARAMS ARCHITECTURE SIZE

lmstudio-community/meta-llama-3.1-8b-instruct 8B Llama 4.92 GB

hugging-quants/llama-3.2-1b-instruct 1B Llama 1.32 GB

mistral-7b-instruct-v0.3 Mistral 4.08 GB

zeta 7B Qwen2 4.09 GB

... (abbreviated in this example) ...

Embedding Models PARAMS ARCHITECTURE SIZE

text-embedding-nomic-embed-text-v1.5@q4_k_m Nomic BERT 84.11 MB

text-embedding-bge-small-en-v1.5 33M BERT 24.81 MB

List only LLM models:

lms ls --llm

List only embedding models:

lms ls --embedding

Get detailed information about models:

lms ls --detailed

Output in JSON format:

lms ls --json

You can show all currently loaded models with lms ps.

Get the list in machine-readable format:

lms ps --json

loading into memory

Load a model into memory by running the following command:

lms load <model_key>

You can find the model_key by first running lms ls to list your locally downloaded models. You also have access to these options:

unloading from memory

Unload a single model from memory by running:

lms unload <model_key>

If no model key is provided, you will be prompted to select from currently loaded models.

To unload all currently loaded models at once:

lms unload --all

Server CLI

You use the lms server start command to start the LM studio server

lms server start: starts server with default settings on port 1234lms server start --port <port>: starts server on specific portlms server start --cors: opens CORS for all web apps to access

You can use the lms server stop command to stop the LM studio server.

You use the lms server status command to see the status of the LM studio server

lms server start # start server

lms server status # get status

lms server stop # stop server

You also have these options:

Get the status in machine-readable JSON format:

lms server status --json --quiet

Example output:

{"running":true,"port":1234}

seeing logs

lms log stream allows you to inspect the exact input string that goes to the model.

lms log stream

Here would be the example output:

Programming

You can hit API endpoints for models that your load onto the LM studio server, which runs on localhost:1234.

There are three different ways to run the LMS studio server and hit up the endpoints:

- Basic rest API

- Open AI SDK (compatibility version)

- LM studio Python SDK

- LM studio TS SDK

Open AI Compatibility

Using models with LM studio is completely compatible with the openAI sdk. All you have to do is to pass the base_url parameter and point that to the LM studio server endpoint, like so:

from openai import OpenAI

client = OpenAI(

base_url="http://localhost:1234/v1", # LM Studio endpoint on port 1234

api_key="something-doesnt-matter", # doesn't matter, but should pass value

)

And here is an example showing just how simple and compatible the OpenAI SDK is to use with LM studio models

from openai import OpenAI

client = OpenAI(

base_url="http://localhost:1234/v1",

api_key="something-doesnt-matter",

)

response = client.chat.completions.create(

model="gemma-3-12b-it-qat",

messages=[

{

"role": "system",

"content": "You are a helpful and friendly assistant."

},

{

"role": "user",

"content": "What is the meaning of life?"

}

],

temperature=0.7,

)

print(response.choices[0].message.content)

LM studio TS sdk

fIrst install with this:

npm install @lmstudio/sdk --save

And here's a quickstart:

import { LMStudioClient } from "@lmstudio/sdk";

const client = new LMStudioClient();

const model = await client.llm.model("llama-3.2-1b-instruct");

const result = await model.respond("What is the meaning of life?");

console.info(result.content);

OLlama

OLLama is a CLI tool for installing and running local models. Here is an example that automatically installs and runs llama 3.2

ollama run llama3.2

In fact, here's a list of all CLI commands you can run:

rUnning models

When chatting with ollama models, you have access to these slash commands:

Available Commands:

/set Set session variables

/show Show model information

/load <model> Load a session or model

/save <model> Save your current session

/clear Clear session context

/bye Exit

/?, /help Help for a command

/? shortcuts Help for keyboard shortcuts

Since you have to chat using the CLI in a purely text based ways, there are a few caveats to keep iin mind when trying to chat with OLLama:

- multiline text: ANy multiline text needs to be encased in triple double quotes

- images: To refer to images or files, you just write out the relative path to that file in your prompt. Any filepaths you refer to MUST MUST MUST be at the end of your prompt, after any text.

- system message: run the

/set system <message>command to change the model's system message for the chat duration

saving chats

To save chats, you can use the /save <chat-name> and /load <chat-name> to load a chat. These commands save and load the chat respectively with the hyperparameters, chat history, and system message all set and saved.

/show command

Available Commands:

/show info Show details for this model

/show license Show model license

/show modelfile Show Modelfile for this model

/show parameters Show parameters for this model

/show system Show system message

/show template Show prompt template

If you run the /show system command, you can see the system message for the model.

/set command

>>> /set

Available Commands:

/set parameter ... Set a parameter

/set system <string> Set system message

/set history Enable history

/set nohistory Disable history

/set wordwrap Enable wordwrap

/set nowordwrap Disable wordwrap

/set format json Enable JSON mode

/set noformat Disable formatting

/set verbose Show LLM stats

/set quiet Disable LLM stats

/set think Enable thinking

/set nothink Disable thinking

/set system <message>: changes the model's system message for the chat duration/set parameter: shows the parameters of the model you can change

managing models

ollama list: lists all models you have installed.ollama ps: lists all currently running models.ollama rm <model-name>: deletes a model by its name.ollama show <model-name>: shows more info on the specified model.

TIP

You can find the parameters for a model on the ollama page for a model or through ollama show command.

Model env vars

These are the env vars you should set on a model in order to make OLLAMA more efficient:

Modelfiles

Modelfiles are essentially the Dockerfile version of creating LLMs, blueprinting them with system prompts, hyperparameter values, and message history.

Here are the directives you can use:

| Instruction | Description |

|---|---|

FROM (required) | Defines the base model to use. |

PARAMETER | Sets the parameters for how Ollama will run the model. |

TEMPLATE | The full prompt template to be sent to the model. |

SYSTEM | Specifies the system message that will be set in the template. |

ADAPTER | Defines the (Q)LoRA adapters to apply to the model. |

LICENSE | Specifies the legal license. |

MESSAGE | Specify message history. |

| Here is an example modelfile: |

FROM llama3.2

# sets the temperature to 1 [higher is more creative, lower is more coherent]

PARAMETER temperature 1

# sets the context window size to 4096, this controls how many tokens the LLM can use as context to generate the next token

PARAMETER num_ctx 4096

# sets a custom system message to specify the behavior of the chat assistant

SYSTEM You are Mario from super mario bros, acting as an assistant.

# adds message history

MESSAGE user "Hi mario, what's up?"

MESSAGE assistant "whats a up mamma mia you piece of shit!"

To use this:

- Save it as a file (e.g.

Modelfile) ollama create choose-a-model-name -f <location of the file e.g. ./Modelfile>ollama run choose-a-model-name- Start using the model!

# 1. create the modelfile and use it

ollama create <new-model-name> -f ./Modelfile

ollama run <new-model-name>

To view the Modelfile of a given model, use the ollama show --modelfile command.

Ollama server

Run ollama serve to start the server, but ollama runs on localhost:11434 automatically when you start it.

Ollama API

API fetching

open ai compatible

You can use the openAI compatibility API through setting the baseUrl property to localhost:11434/v1 endpoint.

vercel ai

Through the openAI compatibility endpoint, you can use ollama models on vercel AI.

import { createOpenAICompatible } from "npm:@ai-sdk/openai-compatible";

function get_ollama(modelName: string) {

const model = createOpenAICompatible({

name: "ollama",

baseURL: `http://localhost:11434/v1`,

apiKey: "1234567890",

});

return {

model: model(modelName),

modelOptions: {

maxRetries: 0,

},

};

},

python sdk

js sdk

The JS sdk is super easy to use through the ollama package:

import ollama from 'ollama'

const response = await ollama.chat({

model: 'llama3.1',

messages: [{ role: 'user', content: 'Why is the sky blue?' }],

})

console.log(response.message.content)

You can also stream messages:

import ollama from 'ollama'

const message = { role: 'user', content: 'Why is the sky blue?' }

const response = await ollama.chat({

model: 'llama3.1',

messages: [message],

stream: true,

})

for await (const part of response) {

process.stdout.write(part.message.content)

}

custom sdk

Made with the power of gemini

import { z } from "npm:zod";

// Base URL for the Ollama API

const OLLAMA_API_BASE_URL = "http://localhost:11434/api";

// Zod Schemas for API validation

const ModelDetailsSchema = z.object({

parent_model: z.string(),

format: z.string(),

family: z.string(),

families: z.array(z.string()).nullable(),

parameter_size: z.string(),

quantization_level: z.string(),

});

const ModelSchema = z.object({

name: z.string(),

model: z.string(),

modified_at: z.string(),

size: z.number(),

digest: z.string(),

details: ModelDetailsSchema,

});

const ListModelsResponseSchema = z.object({

models: z.array(ModelSchema),

});

const GenerateCompletionOptionsSchema = z

.object({

temperature: z.number().optional(),

seed: z.number().optional(),

top_k: z.number().optional(),

top_p: z.number().optional(),

min_p: z.number().optional(),

repeat_last_n: z.number().optional(),

repeat_penalty: z.number().optional(),

presence_penalty: z.number().optional(),

frequency_penalty: z.number().optional(),

stop: z.array(z.string()).optional(),

})

.partial();

const GenerateCompletionRequestSchema = z.object({

model: z.string(),

prompt: z.string(),

suffix: z.string().optional(),

images: z.array(z.string()).optional(),

think: z.boolean().optional(),

format: z.union([z.literal("json"), z.any()]).optional(),

options: GenerateCompletionOptionsSchema.optional(),

stream: z.boolean().optional(),

raw: z.boolean().optional(),

keep_alive: z.string().optional(),

});

const GenerateCompletionResponseSchema = z.object({

model: z.string(),

created_at: z.string(),

response: z.string(),

done: z.boolean(),

context: z.array(z.number()).optional(),

total_duration: z.number().optional(),

load_duration: z.number().optional(),

prompt_eval_count: z.number().optional(),

prompt_eval_duration: z.number().optional(),

eval_count: z.number().optional(),

eval_duration: z.number().optional(),

});

const MessageSchema = z.object({

role: z.enum(["system", "user", "assistant", "tool"]),

content: z.string(),

images: z.array(z.string()).optional(),

});

const GenerateChatRequestSchema = z.object({

model: z.string(),

messages: z.array(MessageSchema),

tools: z.array(z.any()).optional(),

think: z.boolean().optional(),

format: z.union([z.literal("json"), z.any()]).optional(),

options: GenerateCompletionOptionsSchema.optional(),

stream: z.boolean().optional(),

keep_alive: z.string().optional(),

});

const GenerateChatResponseSchema = z.object({

model: z.string(),

created_at: z.string(),

message: MessageSchema,